For deployment, running “ sls deploy ” by default will publish our services at the us-east-1 region on AWS and the “ dev ” stage. To complete our journey, we need to deploy our function to AWS, making this service available for testing. Let’s declare it under the function name, on an attribute named “environment.” functions: Another thing that we used in our function was the environment variable for the bucket name. Now, it’s time to use it by putting the “ role ” attribute under the function name. In the above examples, we have created a specific role for its function named “UploadRole”. Let’s use the HTTP APIs for this function as it is useful for web apps like CORS, support for OIDC, and OAuth 2 authorization. You can learn about the differences between them here. The second one is REST APIs, a previous-generation API that currently offers more features. The first one is HTTP APIs, which are designed for low-latency, cost-effective integrations with AWS services, including AWS Lambda and HTTP endpoints. The API Gateway has two ways to integrate an endpoint http to lambda, http endpoint, or other AWS Services. One important thing here is the event section, where we should specify “http” to integrate the AWS API Gateway to our lambda function. We need to declare our functions inside the “functions” tree, give it a name, and other required attributes. So now, we just need to configure our function on “serverless.yml”. arn:aws:logs:$)Ĭonst boundary = parseMultipart.getBoundary(event.headers)Ĭonst parts = parseMultipart.Parse(om(event.body, 'base64'), boundary) Now, define the AWS IAM Role ( UploadRole ) that your lambda function will use to get access to S3 (respecting the least privileged principle of IAM) and put the logs from the request into a CloudWatch log group. The stage is useful for distinguishing between development, QA, and production environments. You can also define a property “ stage ” into provider configuration at serverless.yml, for later use. 
resources:Īs you can see, the “BucketName” at “ ModuslandBucket ” resource uses a variable from the stage defined when the deployment process is made, or the default value that is defined into a variable if the stage is not passed. Let’s define the S3 bucket that stores the files that will be uploaded. The Serverless Framework defines resources for AWS using the CloudFormation template. To interact with AWS Services, you can create the services through the AWS Console, AWS CLI, or through a framework that helps us build serverless apps, such as Serverless Framework. Now, your project is ready to define the resources for AWS and the implementation for your lambda function, which will receive the file and store it in an S3 bucket. Lastly, run “ npm init ” to generate a package.json file that will be needed to install a library required for your function. To start an SLS project, type “sls” or “serverless”, and the prompt command will guide you through creating a new serverless project.Īfter that, your workspace will have the following structure: With everything configured, we are now ready to start the project. Use the VSCode for it to improve productivity. So, if you already have npm installed, just run “ npm install serverless ”. Install Serverless Framework (also known as SLS) through npm or the standalone binary directly. Now that you have the credentials, configure the Serverless Framework to use them when interacting with AWS.

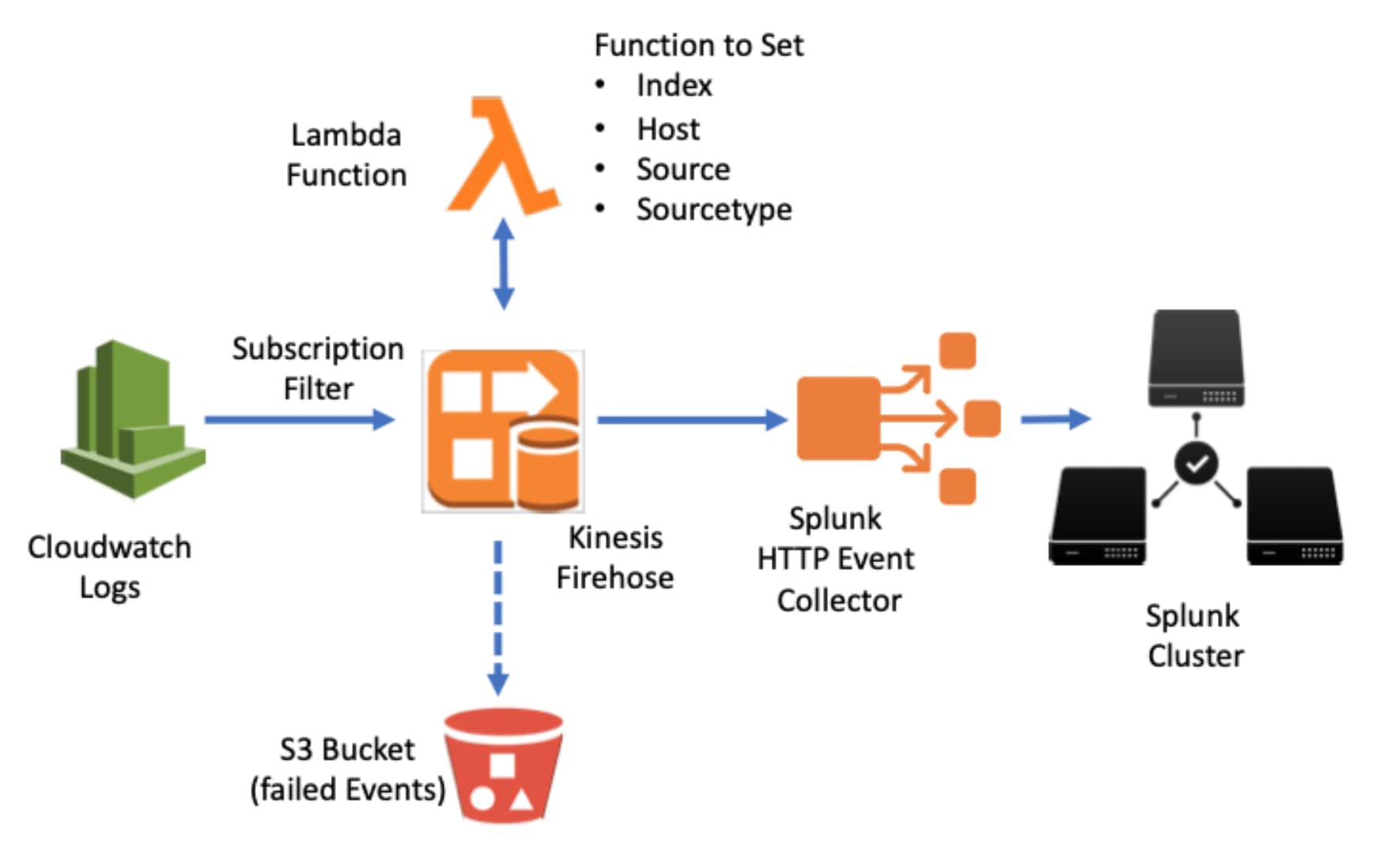

To help with the complexity of building serverless apps, we will use Serverless Framework - a mature, multi-provider (AWS, Microsoft Azure, Google Cloud Platform, Apache OpenWhisk, Cloudflare Workers, or a Kubernetes-based solution like Kubeless) framework for serverless architecture.īefore starting the project, create your credentials for programmatic access to AWS through IAM, where you can specify which permissions your users should have. We will do so with the help of the following services from AWS - API Gateway, AWS Lambda, and AWS S3. Or in another way, how can know the most recent update in S3 so that I can export data after that into S3.Today, we will discuss uploading files to AWS S3 using a serverless architecture. If it works as a listener, namely invoked when there is any change in Cloudwatch logs, how can I update only the newly-added logs into S3 rather than all logs within a time range. By its name, it should work like a listener on something/changes. How can I make sure there is an update on S3 once there are any changes on Cloudwatch logs. Print("Response of logs-to-s3 lambda function: "+response)Īnd also I set up a trigger on the Cloudwatch logs that I want to export. # TODO: create an export task from Cloudwatch logs I search the Internet and work out some code. Now I need to export logs in Cloudwatch into Amazon S3 in a streaming way using Lambda.
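The multipart parsing step in the upload function can be illustrated without any library. The sketch below is not the `parse-multipart` implementation; `extractBoundary` and `extractFirstFile` are hypothetical helper names, and it assumes an API Gateway event whose body is a well-formed `multipart/form-data` payload with CRLF line endings and a boundary that does not occur inside the file data.

```javascript
// Sketch only: a dependency-free stand-in for the multipart parsing step.
// extractBoundary and extractFirstFile are hypothetical helpers for illustration.

// Pull the boundary token out of a Content-Type header value such as
// 'multipart/form-data; boundary=----abc123'.
function extractBoundary(contentType) {
  const match = /boundary=(?:"([^"]+)"|([^;]+))/i.exec(contentType || '');
  if (!match) throw new Error('No multipart boundary in Content-Type header');
  return (match[1] || match[2]).trim();
}

// Decode the request body and return the first part that carries a filename,
// or null when the payload contains no file part.
function extractFirstFile(event) {
  const headers = event.headers || {};
  const contentType = headers['content-type'] || headers['Content-Type'];
  const boundary = extractBoundary(contentType);

  // API Gateway base64-encodes binary payloads before invoking Lambda.
  const body = Buffer.from(event.body, event.isBase64Encoded ? 'base64' : 'utf8');
  const delimiter = Buffer.from(`--${boundary}`);

  let start = body.indexOf(delimiter);
  while (start !== -1) {
    const next = body.indexOf(delimiter, start + delimiter.length);
    if (next === -1) break; // only the closing delimiter is left

    // A part sits between "<delimiter>\r\n" and "\r\n<next delimiter>".
    const part = body.subarray(start + delimiter.length + 2, next - 2);
    const headerEnd = part.indexOf('\r\n\r\n');
    if (headerEnd !== -1) {
      const partHeaders = part.subarray(0, headerEnd).toString('utf8');
      const name = /filename="([^"]*)"/i.exec(partHeaders);
      if (name) {
        const type = /content-type:\s*([^\r\n]+)/i.exec(partHeaders);
        return {
          filename: name[1],
          contentType: type ? type[1].trim() : 'application/octet-stream',
          data: part.subarray(headerEnd + 4), // raw file bytes as a Buffer
        };
      }
    }
    start = next;
  }
  return null;
}
```

In a handler, the returned `filename`, `contentType`, and `data` would be the values passed on to the S3 upload call. Working on `Buffer` offsets rather than strings keeps binary files intact.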
